As legal professionals move into widespread GenAI adoption, agentic AI promises greater autonomy in legal workflows, but it brings new oversight and ethical challenges that demand clear guidance and targeted education.

Key takeaways:

-

-

-

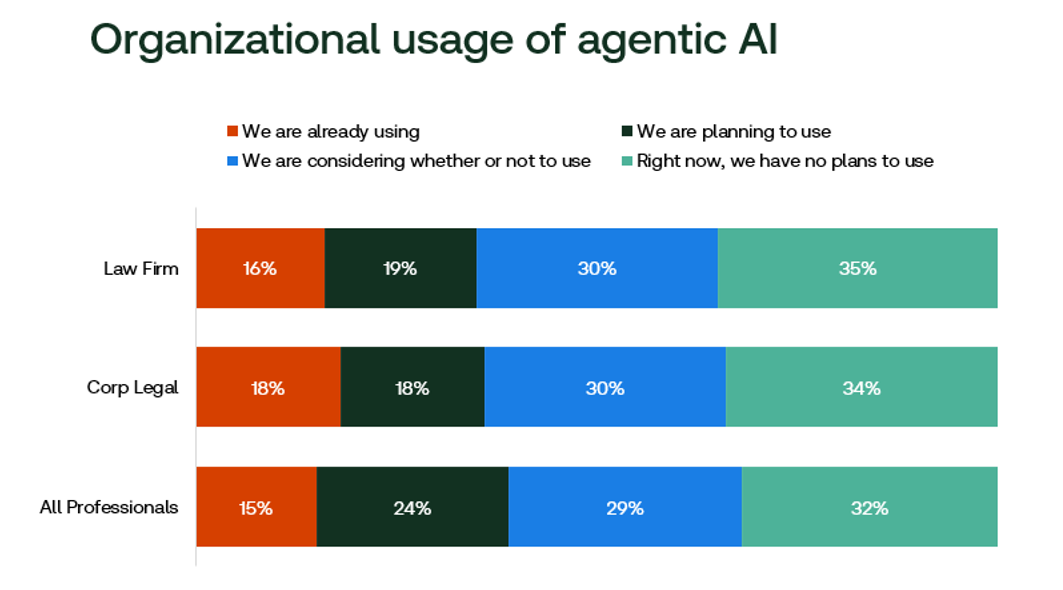

Agentic AI poised for adoption uptick — Agentic AI is following GenAI’s rapid adoption in the legal industry, with less than 20% of firms currently implementing agentic systems but half planning or considering adoption in the near future, according to a new report.

-

Adoption depends on human oversight answers — Legal professionals are generally optimistic about agentic AI’s potential, but successful adoption depends on explicit guidance about human oversight and the lawyer’s role in maintaining ethical standards.

-

Time to retool AI education? — Agentic AI’s increased autonomy introduces new oversight and ethical challenges for law firms, making targeted education and clear guidance essential to understanding the differences from GenAI.

-

-

Over the past several years, law firms and corporate legal departments have turned towards generative AI en masse. At the beginning of 2024, just 14% of all law firms and legal departments had an enterprise-wide GenAI tool. Two years later, that number had risen to 43% of all firms and departments, according to the 2026 AI in Professional Services Report from the Thomson Reuters Institute (TRI). For large law firms and legal departments, those percentages are, not surprisingly, beginning to approach 100%.

With GenAI adoption now this widespread, legal industry leaders are turning their attention to two primary initiatives. The first, of course, is how to get the most out of the AI tools they already have, a task that is proving somewhat elusive. Currently, fewer than 20% of lawyers say their organizations measure AI's return on investment, and most corporate lawyers say they have no idea how their outside law firms are approaching AI. Thus, the second top priority for law firms and corporate legal departments in 2026 and beyond is instituting not just AI tools but also an AI strategy.

However, even as the legal industry reaches a tipping point in GenAI adoption, technology innovation continues unabated. Agentic AI has emerged as the next wave of innovation that could change how lawyers work on a daily basis by autonomously completing multi-step tasks. For example, agentic AI systems are already being built for the legal industry that independently research a regulation or law, draft a document based on the findings, identify pitfalls, and revise the document, pausing for human guidance only where desired.

According to the AI in Professional Services Report, the legal industry is already making headway towards implementing agentic AI systems. For agentic AI to truly take hold in legal, however, lawyers still require more education around not only how it differs from the GenAI systems they already have in place, but also when and where human intervention needs to occur within an agentic system.

The early stages of agentic AI

Examining current agentic AI adoption in the legal industry almost takes one back in time: two years, to be exact. Following the public release of GenAI in late 2022, many legal organizations spent 2023 evaluating and experimenting with AI systems, usually with a small working group of interested guinea pigs. As a result, only 14% of survey respondents said their law firms or corporate legal departments were engaged in organization-wide GenAI rollouts at the start of 2024. However, more than half of respondents said their organizations expected to roll out large-scale GenAI systems within the next 1 to 3 years, and the two years since have largely borne that prediction out.

Agentic AI usage in the first half of 2026 looks much like GenAI usage in 2024. The legal industry began experimenting with agentic AI at the start of 2025, with an eye towards actual implementation in 2026 and beyond (particularly as legal software providers began integrating agentic systems into their own products). As such, fewer than 20% of recent survey respondents say their organization is engaged in widespread agentic AI adoption, while about half say their organization is either planning to use or considering whether to use agentic AI in the near future.

By and large, lawyers feel positive about the agentic AI movement. When asked about their sentiment towards agentic AI, 51% of legal industry respondents said they felt excited or hopeful, while just 19% said they felt concerned or fearful. Further, about half (47%) said they believe agentic AI should be used for legal work, while 22% said it should not, and the remainder were unsure. These figures largely track with sentiment about GenAI in 2024, when about 50% of legal professionals felt positive about it; that share has since grown to two-thirds.

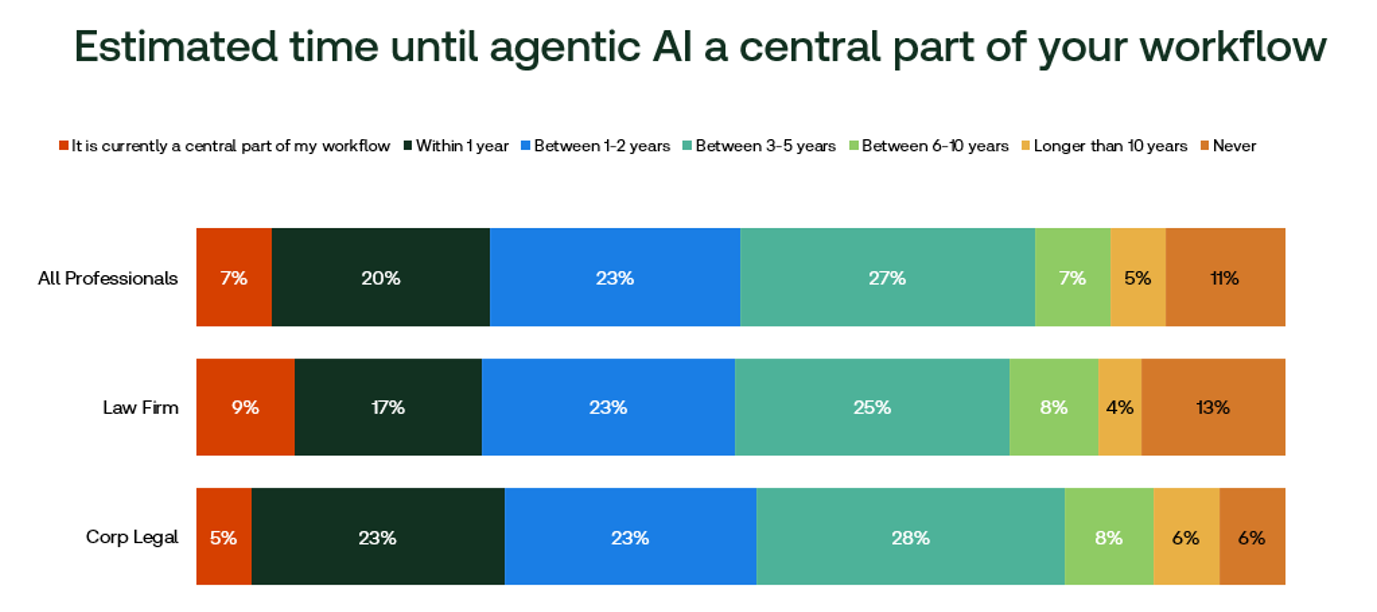

This all lends further credence to a rise in agentic AI usage similar to what law firms and corporate legal departments experienced with GenAI over the course of 2024 and 2025. Indeed, when asked when they expect agentic AI to become a central part of their workflow, few respondents said they have already built agentic systems into their daily work, but a majority expect it to be central within the next 3 to 5 years.

The unique barriers of agentic AI adoption

However, agentic AI does differ from GenAI in one crucial area that may limit its growth potential within the legal industry: autonomy. GenAI systems generally operate on a back-and-forth basis, in which users give the tool a prompt, receive its output, and iterate from there. Agentic AI, by contrast, is designed to be more automated, requiring human input only at predetermined points in the process. And that makes some lawyers understandably nervous.

When asked why they might feel hesitant about using agentic AI for legal tasks, the most common answer was a general fear of the unknown, but the second most common answer dealt with the need for careful monitoring and oversight. In fact, some respondents said they were excited about GenAI, but more cautious about agentic AI’s potential.

“Agentic AI, while exciting, to me removes oversight a step too far,” said one such lawyer from a US law firm. “I like the idea of prompting and reviewing a result. It is something else to have a machine have so much autonomy in the actual doing of a thing and potentially acting on my behalf without that very concrete review.”

An assistant GC at a US company also pointed to potential privacy and security concerns, adding: “The fact that agentic AI operates in a much more autonomous way, with a lack of control from the user, means there are many unknowns that are hidden beneath the process.”

For law firm and corporate legal department leaders looking to implement agentic AI systems in their practice, this means rethinking AI education and training. Beyond that, legal AI educators will need to pinpoint, and perhaps over-explain, the specific points at which human oversight must occur in agentic systems. More autonomous does not mean fully autonomous; particularly for lawyers with ethical duties regarding their work product, lawyer oversight will be a necessary part of any agentic system.

For law firm or legal department leaders, that means finding the right balance between efficient workflows and human intervention will be key to agentic AI adoption. And those organizations that can best communicate their human-in-the-loop approach to their professionals up-front will be rewarded with broader and more reliable adoption.

After all, lawyers clearly feel positive about an agentic AI future. They just need the lawyer's role in this new paradigm spelled out explicitly.

“Agentic AI is powerful, but its moral compass must come from humans,” one UK law firm barrister noted aptly. “Lawyers are trained to safeguard fairness, rights, and the rule of law — principles that should guide how AI is designed, governed, and deployed. Hope lies in our ability to shape AI through these values for fairer values for society as a whole.”

You can download a full copy of the Thomson Reuters Institute's 2026 AI in Professional Services Report from TRI.