As AI accelerates along an exponential curve of features and intelligence, there are hidden risks and opportunities that require active governance.

AI (artificial intelligence) has become like the air: it is all around us and touches everything we do. Indeed, AI advancements are highly efficient, increase revenues, and leverage humans-in-the-loop. However, when it comes to AI in all its ever-changing, kaleidoscopic forms, its growing functionalities, its demands for data, and its advancing intelligence, who is responsible for creating, managing, and retiring the roadmaps of integration?

Simply put, how do all these AI solution pieces fit together or even talk to each other? What happens when there is a need to audit the cascading inputs and outputs or implement error corrections? Is there any way to distinguish AI-created data from data produced by traditional systems? While AI is unique, its rapid growth is exposing the fractures and fallacies of cascading upstream and downstream integrations, along with the limits of our ability to assess quality, accuracy, and even systems of record. Indeed, history is repeating itself.

The future requires a proactive integration of innovative research tempered by domain market forces, consumer behaviors, AI technology (such as chips and software), and digital data explosions, all glued together by security, legal, and regulatory requirements. It is a future that demands layers of integrated solutions, all requiring transparency, heterogeneity, and risk attribution.

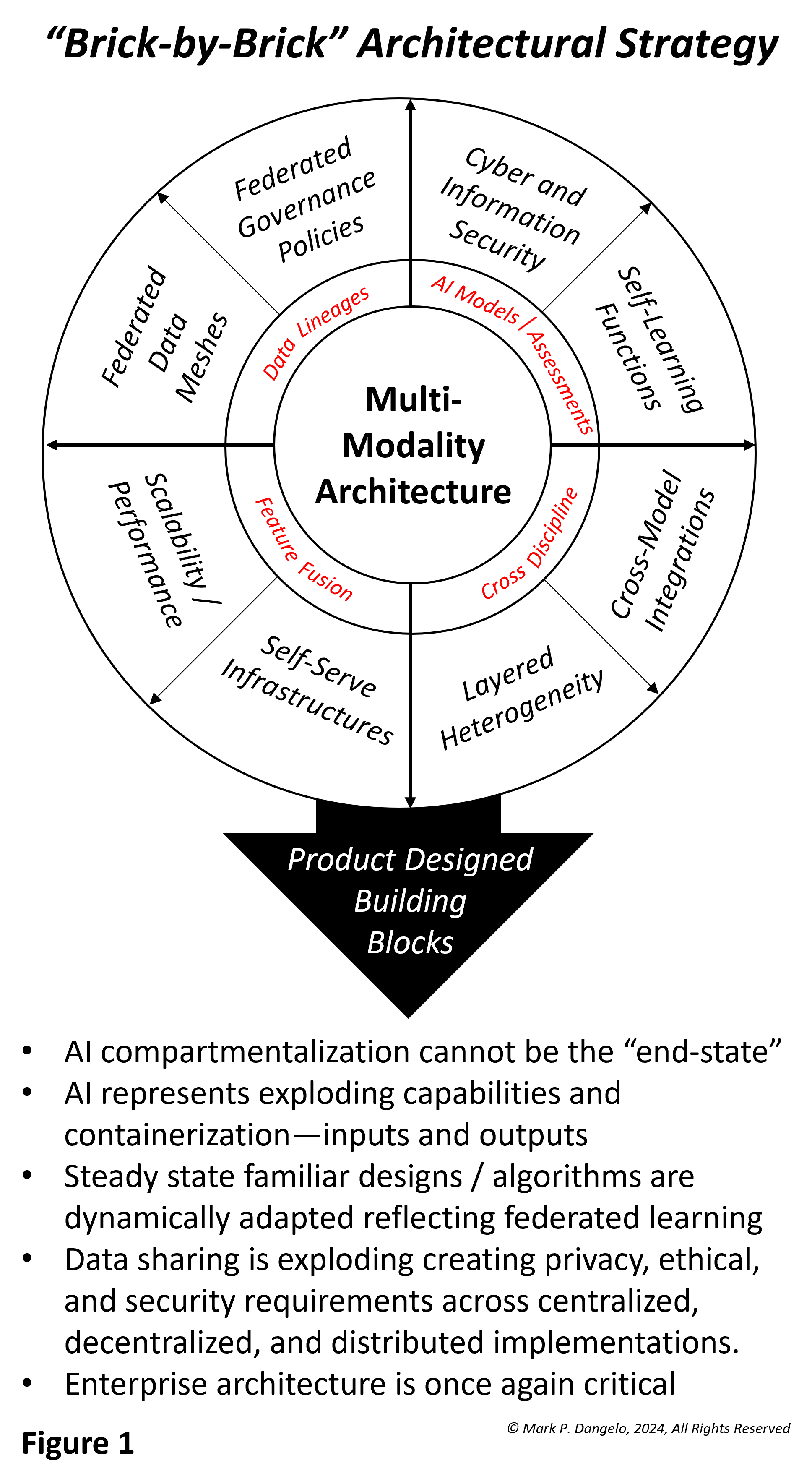

At its core, AI is a data-driven solution. At its edges, AI represents an ability to extend data ideation using building blocks of functionality uniquely assembled — but how? Let’s discuss an illustrative representation of delivering AI governance by design rather than the traditional siloed product mindsets of one-and-done.

The above graphic illustrates the macro-segmentation that is required in an Age of AI often delivered using Agile methods underpinned by industry-defined, universal data models. Yet, even while learning from legacy mistakes, the introduction of AI solution sets still creates both opportunities and challenges.

- AI-impacted legacy tech — Repairing the existing impacts of fragmented, siloed legacy systems is estimated to cost up to $2 trillion, with profit and operational losses fast approaching $3 trillion per year.

- Data “bar codes” — This represents an easy-to-understand solution that is complex in implementation: From where does the data we use to make decisions, impact operations, or report to investors originate? If regulators, auditors, or legal personnel asked for the traceability of the inputs, can the current or future AI solutions meet the due diligence requirements?

- Interoperability and trust — A core tenet of vendor packages, the idea of (open-source) APIs and data virtualization has dominated the last 25 years of systems, even down to mobile applications. Yet, AI introduces production-ready unknowns for scale, validity, performance, and unintended consequences beyond its 2023-24 pilots.

- Skills and simplicity — While researchers seek to expand the options of AI and its capabilities, the challenge is where the skills will come from to operate, deliver, and integrate data that is doubling in volume every year, not to mention the opaque AI systems provided by vendors, skunkworks, startup efforts, and small-scale prototypes.

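To make the data "bar code" idea above concrete, here is a minimal sketch in Python, offered as one possible illustration rather than a prescribed design. It assumes each record carries its originating system, a flag for whether an AI model produced it, and the IDs of its upstream inputs. The names `DataRecord`, `trace_lineage`, and the sample system names are hypothetical.

```python
from dataclasses import dataclass
import hashlib


@dataclass(frozen=True)
class DataRecord:
    """A value plus the provenance 'bar code' that travels with it (hypothetical schema)."""
    record_id: str
    value: str
    source_system: str      # assumed name of the originating system
    ai_generated: bool      # True if an AI model produced the value
    parents: tuple = ()     # record_ids of the upstream inputs

    @property
    def barcode(self) -> str:
        # SHA-256 over content and origin, so any later change alters the code.
        payload = "|".join([self.record_id, self.value, self.source_system,
                            str(self.ai_generated), ",".join(self.parents)])
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def trace_lineage(record_id, registry):
    """Return every upstream record of record_id, nearest inputs first."""
    lineage, queue, seen = [], list(registry[record_id].parents), set()
    while queue:
        current = queue.pop(0)
        if current in seen:
            continue
        seen.add(current)
        lineage.append(registry[current])
        queue.extend(registry[current].parents)
    return lineage


# Illustrative data only: a ledger value feeds an AI forecast, which feeds a report.
registry = {r.record_id: r for r in [
    DataRecord("ledger-1", "1200.00", "core_ledger", False),
    DataRecord("forecast-1", "1350.00", "forecast_model", True, ("ledger-1",)),
    DataRecord("report-1", "Q3 outlook: 1350.00", "investor_report", False, ("forecast-1",)),
]}

upstream = trace_lineage("report-1", registry)
ai_inputs = [r.record_id for r in upstream if r.ai_generated]
```

Under these assumptions, an auditor asking where a reported figure came from can walk `upstream` and see at once which inputs were AI-created. A production system would also need durable storage, access control, and signing, all of which this sketch leaves out.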

Making architecture even more challenging, over the last 16 months we’ve seen the traditional segmentation between industry results and research, whether internal or academic, blur. Today, hundreds of AI solutions are being developed every week, with investment values and M&A actions dominating strategies that reflect the front-office fear, uncertainty, and doubt of being left behind.

The complexities of AI, its vast ability to disintermediate processes, and its data foundation require strategies and architectures that weave together rapidly evolving technologies, all of which affect organizational change and the skills within. What is the approach, design, or outcome? And, from business leaders: what will it cost compared with the risks of non-compliance?

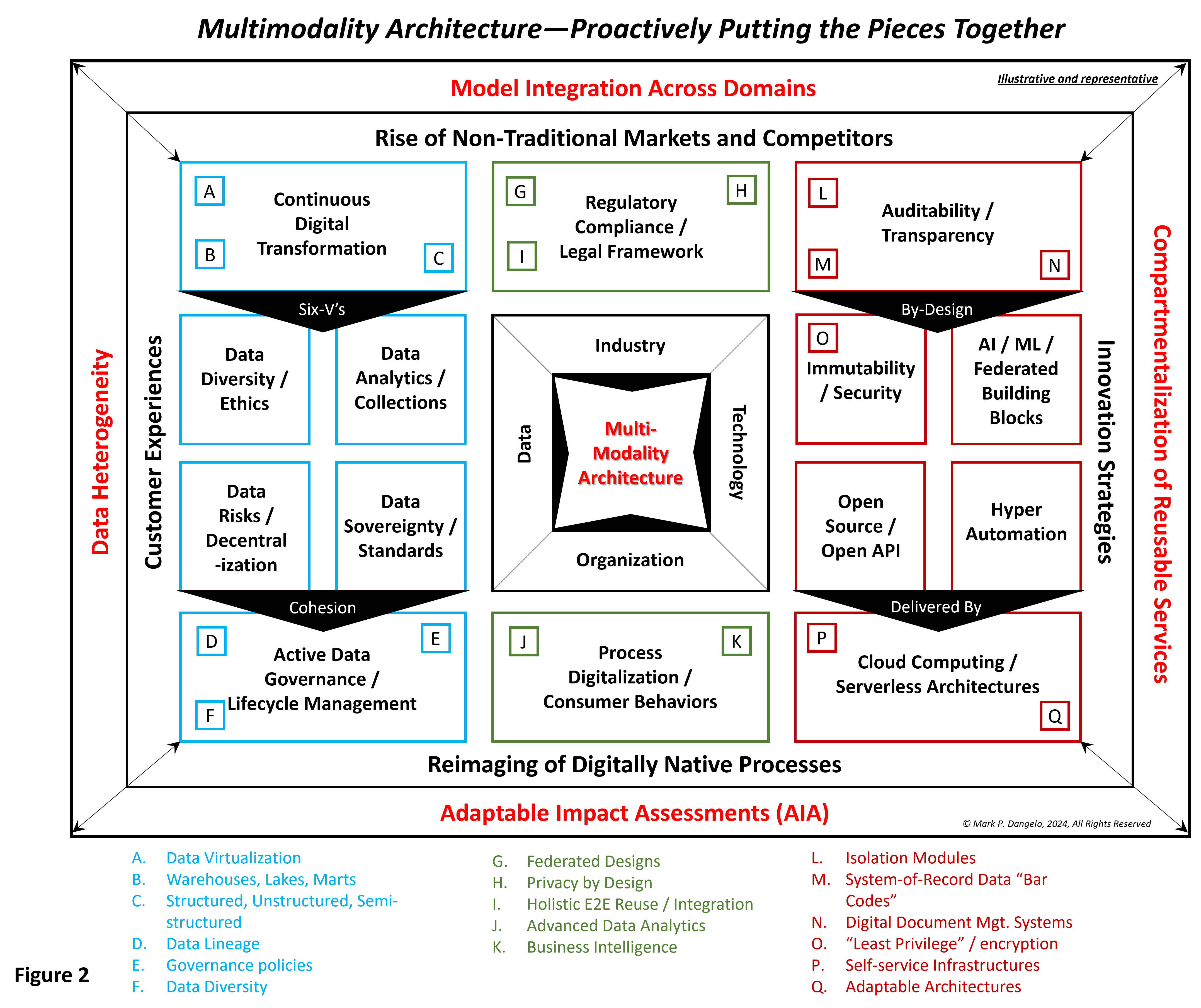

Around these two questions comes a set of emerging designs for actively managed, multimodal products and capabilities, linked across a fabric of solutions in a way that is analogous to building with Legos. The accompanying graphic represents a comprehensive blueprint to actively address the demands of AI governance, encased within result-oriented, business-demanded delivery. It is indeed a picture worth 10,000 words, and one that implies numerous upskilling priorities for industry and research.

On first studying this graphic, business leaders see complexity and divisional chaos. For larger firms across highly regulated industries such as banking and financial services, these designs are already being discussed across their multibillion-dollar budgets and among their thousands of IT staff members. For midsize and smaller firms, designing a multimodal architecture against such a blueprint seems impossible at first glance.

Yet, development of these blueprints or fabrics has already been done. Looking across industries and disciplines, we can see examples in privacy-by-design solutions, in outsourcers seeking to modernize their products for consumers, and in brand-name consulting leaders who are not just proactively engaging their customers but assembling solutions that meet their future needs.

For researchers in industry and academia, their deep understanding of each unique area provides the roadmaps for adaptation in the face of hyperscale and rapid-cycle technologies. This is where corporate leaders who are driven by results must balance what is available with what is possible when the innovation cycles for AI advancements are now measured in weeks and months rather than years. These AI realities, coupled with regulators and their exploding oversight, demand more than the traditional adoption of siloed regulatory technology to create governance solutions.

Only with a holistic approach to AI architecture will enterprises and their researchers arrive at a workable and efficient solution to regulation. Regulation is the glue that demands integration, and integration itself is demanded by fragmented solutions. Indeed, solutions ensure that the enterprise can remain profitable in the face of new and opaque market forces. Linking them all together will be unfamiliar, but it is a task that cannot be left to chance.

While industry and research personnel want AI to be simple, that represents an assumption that there is total transparency and recourse even if we use natural language interfaces. AI is not a magic button that operates in a vacuum — industry and researchers have already tried this repeatedly and it is a recipe for future chaos.

To ignore rapid-cycle AI progressions as a business leader is problematic, and failing to integrate disparate technological solutions is déjà vu. The complexity and confusion of AI are just beginning, yet the rush to deep research and fast results is bringing back the ghosts of prior step-function shifts in innovation and computing.

In the end, AI will be about architectural adaptability, not just products, technology, data, or even regulators. AI is a blending of the next generation of demands for strategy and architecture. Those industries and firms that embrace the transformative paradigm will likely come to represent the future of leadership.